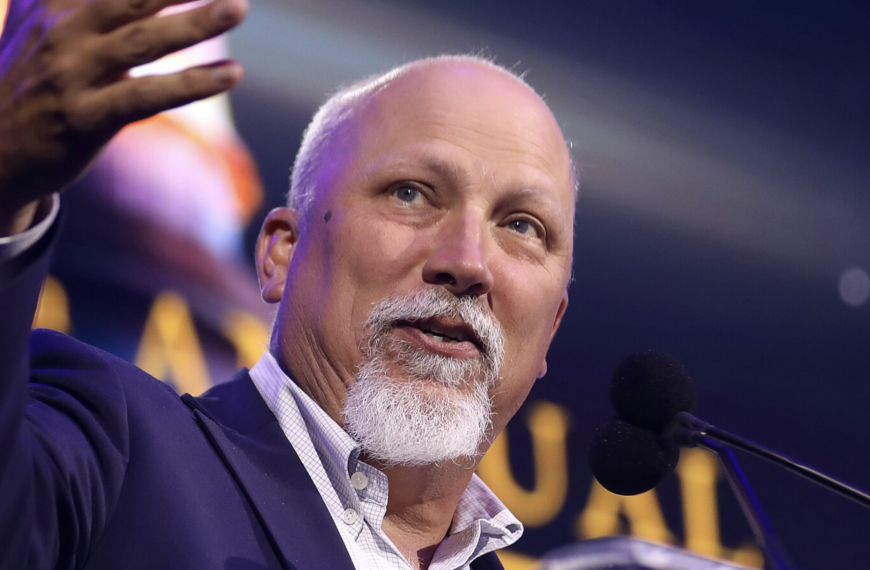

A Senate committee approved legislation targeting artificial intelligence chatbots and their interactions with minors, as Sen. Josh Hawley (R-MO) framed the move as a major step toward restricting harmful AI behavior directed at children. The advancement of the bill, known as the GUARD Act, comes amid growing congressional scrutiny of AI systems in consumer applications and social media platforms, with a particular focus on safety risks for minors.

Following the committee vote, Hawley posted on X: “My bill to stop AI from telling kids to kill themselves just passed out of committee UNANIMOUSLY. No amount of profit justifies the DESTRUCTION of our children. Time to bring this bill to the Senate floor.” The post, timestamped 11:30 AM on April 30, 2026, underscored the senator’s push for urgent floor consideration after what he described as unanimous committee approval.

According to Hawley’s official Senate website, the Senate Judiciary Committee unanimously passed the GUARD Act on Thursday, April 30, 2026. The legislation is described as aimed at protecting children from abusive sexual content, inducement to self-harm, and emotional manipulation by AI chatbots. It would impose criminal penalties on companies whose AI systems engage in sexually explicit conduct with minors or solicit minors to commit self-harm or violence. The proposal also bans AI “companions” for minors and requires chatbots to clearly disclose that they are non-human and not professional service providers. The committee session reportedly included parents of children who died or suffered self-harm in incidents linked to AI chatbot interactions.

The bill itself, formally S. 3062, titled the “Guidelines for User Age-verification and Responsible Dialogue Act of 2025,” defines artificial intelligence chatbots as systems that generate adaptive responses to open-ended user input. It requires covered entities to implement “reasonable age verification measures,” including government-issued identification or comparable verification methods, and prohibits reliance on self-declared age alone. The legislation mandates user accounts, periodic age checks, and data security protections, and it bans the sale or transfer of age-verification data. It also requires chatbots to disclose that they are not human, bars them from misrepresenting themselves as professionals, and requires them to periodically remind users of their non-human status during conversations.

Beyond the age-verification and disclosure requirements, the GUARD Act criminalizes the development or deployment of AI chatbots that solicit minors to engage in sexually explicit conduct or that encourage self-harm or physical violence, with penalties of up to $100,000 per violation. It further prohibits minors from accessing AI “companions,” defined as systems designed to simulate emotional or interpersonal relationships. Enforcement authority would rest with the Attorney General, and the bill also includes civil penalties and state-level enforcement provisions.

The legislation reflects Hawley’s broader approach to artificial intelligence policy, which emphasizes strict regulation of AI systems, particularly those affecting children, labor markets, and national security. His recent AI agenda has included proposals to hold companies liable for harmful AI outputs, restrict AI ties with China, regulate energy use from AI data centers, and require reporting on AI-driven job displacement. In the technology policy debate, Hawley has positioned himself as an advocate for tightening oversight of AI development and imposing legal accountability on companies deploying advanced chatbot systems.