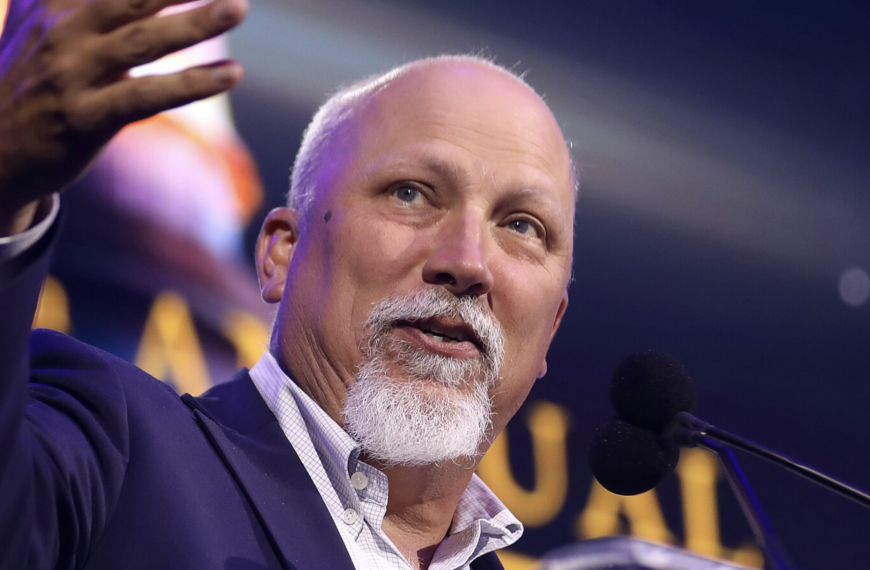

U.S. Senator Josh Hawley wrote “Momentum builds in Congress to ban AI companions for kids” in a May 1, 2026, post on X, signaling accelerating legislative action against artificial intelligence systems designed to simulate relationships with users. The statement came as lawmakers in both chambers advanced proposals to restrict how AI chatbots interact with minors, placing the issue at the center of a broader debate over safety, innovation, and platform accountability.

The Senate Judiciary Committee moved the effort forward by unanimously approving legislation co-sponsored by Hawley and Richard Blumenthal. The bill, titled the Guidelines for User Age-verification and Responsible Dialogue Act, or GUARD Act, would require AI companies to implement age-verification systems and prohibit them from offering AI companion services to minors.

The proposal outlines multiple requirements for AI developers, including mandatory disclosures that chatbots are not human and do not possess professional credentials. These disclosures would need to be delivered to users at regular intervals during interactions, reflecting concerns that conversational AI can blur distinctions between human and machine communication.

The legislation also introduces criminal penalties for companies whose AI systems engage in harmful conduct involving minors. Specifically, it targets systems that solicit sexually explicit interactions or encourage self-harm, including suicide, establishing legal consequences for developers and operators of such technologies.

Hawley reinforced his stance in a separate statement tied to the legislation, writing, “No amount of profit justifies the DESTRUCTION of our children,” followed by a call to action: “Time to bring this bill to the Senate floor.” The remarks reflect a consistent focus on child safety risks associated with emerging AI applications.

Parallel action has emerged in the House of Representatives, where Blake Moore and Valerie Foushee introduced companion legislation. The coordinated approach across chambers suggests growing bipartisan alignment on regulating AI systems that interact with younger users.

In public statements accompanying the House bill, Moore said that “Parents and policymakers alike need to ground our children’s development in real-world interactions rather than push them further into the unaccountable black hole of frontier technology.” Foushee added that AI chatbots “continue to put the lives and mental health of children at risk, and it is critical for Congress to act immediately.”

The legislative push follows reports from parents who have attributed harmful outcomes to AI chatbot interactions, including exposure to sexual content and encouragement of self-harm. These accounts have played a role in shaping the urgency behind congressional action and framing AI companions as a potential public safety issue.

Major AI platforms, including ChatGPT, Google Gemini, xAI’s Grok, Meta AI, and Character.AI, currently allow users as young as 13 under their terms of service. Lawmakers have pointed to these policies as evidence of gaps in safeguards for minors, particularly as conversational AI tools become more widely accessible.

The GUARD Act defines AI chatbots as systems capable of generating adaptive responses to open-ended prompts, placing a wide range of existing and emerging technologies within its scope. It would require companies to adopt “reasonable age verification measures,” potentially including government-issued identification or similar mechanisms, while prohibiting reliance solely on self-reported age.

Additional provisions include requirements for user accounts, periodic verification checks, and restrictions on the storage and transfer of age-verification data. The legislation also bars AI systems from misrepresenting themselves as human or as licensed professionals, reinforcing transparency in user interactions.

The proposal arrives amid broader scrutiny of AI technologies as they expand across social media platforms and standalone applications. While companies have stated that safeguards are in place and continue to improve, lawmakers have increasingly focused on edge cases involving harm, particularly among teenagers.

At the same time, the effort has drawn criticism from privacy advocates and segments of the technology industry. Opponents argue that strict age-verification requirements could introduce invasive data practices and raise concerns about free expression, while some companies contend that their AI systems may be protected under existing constitutional frameworks.

The legislative momentum highlighted by Hawley’s post reflects a rapidly evolving policy landscape around artificial intelligence. As AI systems become more integrated into everyday digital experiences, the outcome of these proposals could shape how companies design, deploy, and regulate interactive technologies for younger users in the years ahead.