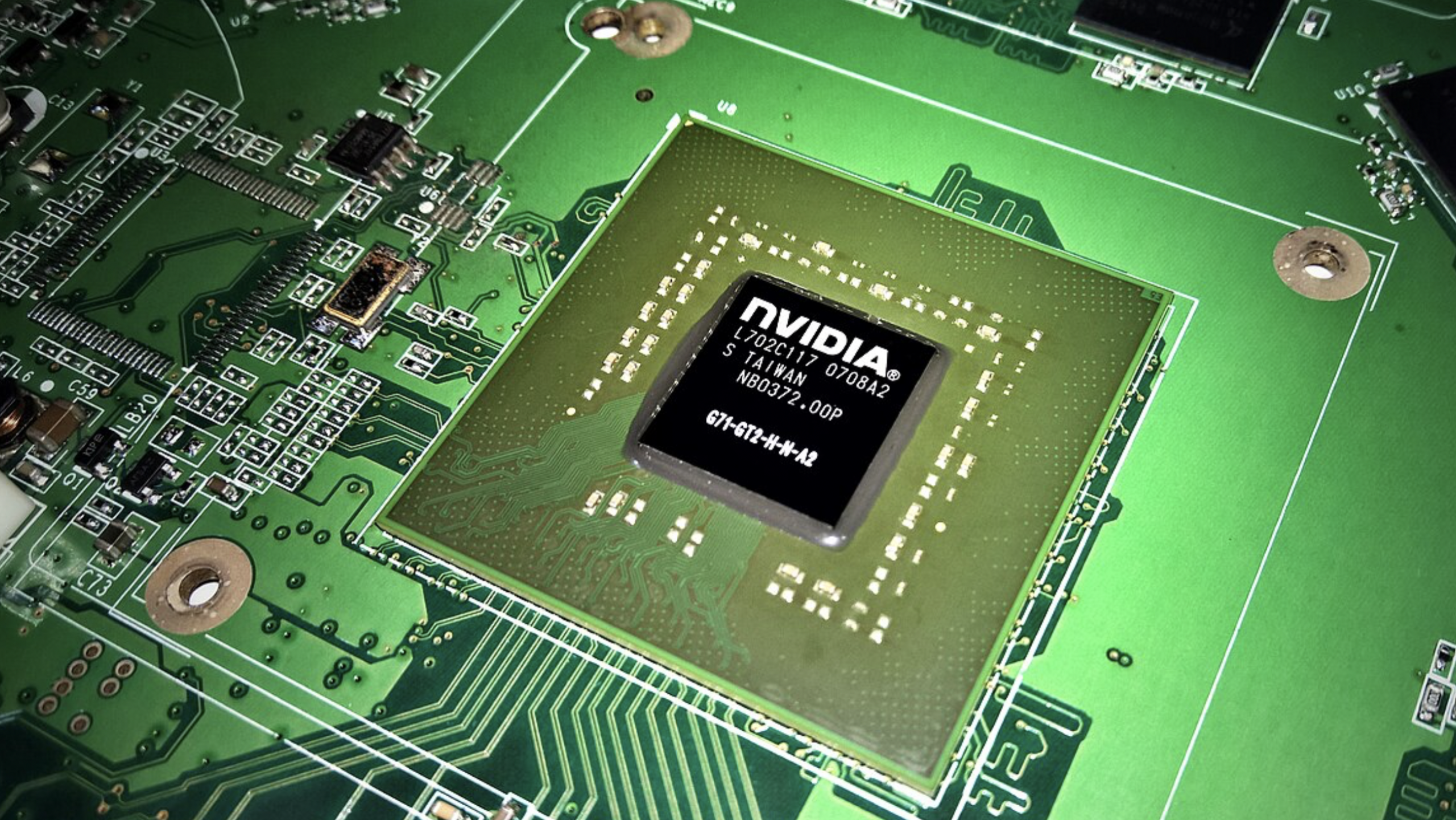

The race to build faster artificial intelligence systems is reshaping the architecture of modern data centers, as companies shift away from general-purpose processors toward specialized AI chips. These processors, designed to handle large-scale machine learning workloads, are becoming central to cloud infrastructure.

Major technology firms are investing heavily in custom silicon to reduce their reliance on third-party suppliers and improve efficiency. These chips are optimized for parallel processing, which allows them to handle the massive datasets required to train advanced AI models.

The shift is also driven by rising energy demands. AI workloads consume significantly more power than traditional computing tasks, which pushes companies to design chips that deliver higher performance per watt. This pressure has also spurred innovations in cooling systems and chip packaging.

At the same time, competition in the semiconductor industry is intensifying. New entrants and established players alike are racing to secure manufacturing capacity and develop next-generation designs, particularly as demand continues to outpace supply.

The transformation of data centers into AI-focused hubs marks a long-term shift in computing. As AI adoption expands across industries, specialized hardware is expected to play an increasingly critical role in maintaining performance and cost efficiency.