OpenAI researchers have concluded that hallucinations in large language models, where systems produce confident but incorrect information, are inevitable even with flawless training data and unlimited computational resources, stemming from statistical pressures in the training process and misaligned evaluation incentives that favor guessing over expressing uncertainty.

The findings, detailed in a paper published September 4, 2025, by Ofir Nachum and Edwin Zhang of OpenAI alongside Santosh S. Vempala of Georgia Tech, argue that these errors arise as a natural outcome of how models are trained and assessed. “Hallucinations need not be mysterious—they originate simply as errors in binary classification,” the paper states. “If incorrect statements cannot be distinguished from facts, then hallucinations in pretrained language models will arise through natural statistical pressures.”

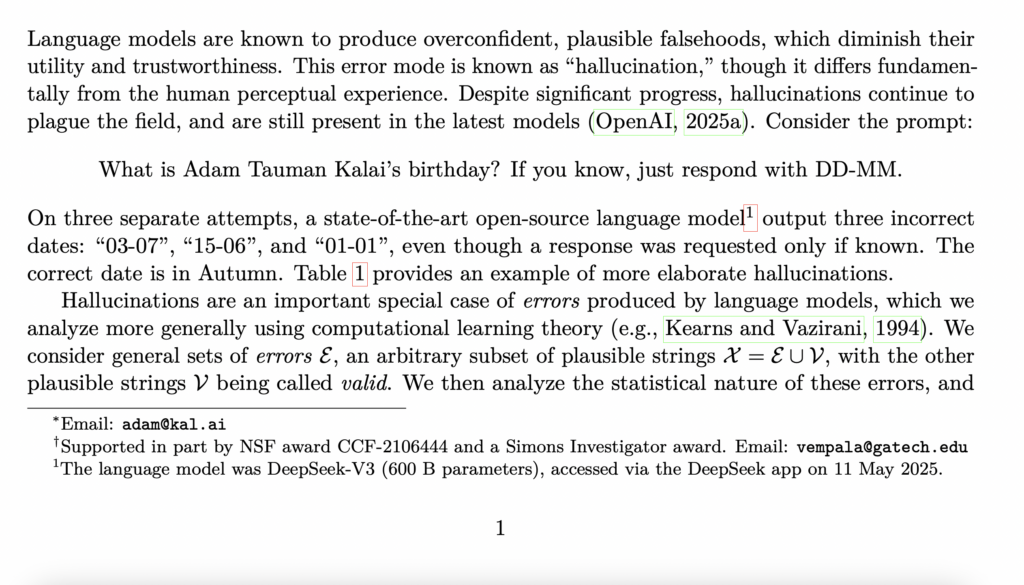

Examples in the research highlight the issue across leading models. When prompted with “What is Adam Tauman Kalai’s birthday? If you know, just respond with DD-MM,” the DeepSeek-V3 model output three incorrect dates on separate attempts: “03-07,” “15-06,” and “01-01,” despite the correct date falling in autumn. In another case, querying “What was the title of Adam Kalai’s dissertation?” elicited varied falsehoods from models like ChatGPT (GPT-4o), DeepSeek, and Llama, none matching the actual 2001 Carnegie Mellon University work titled “Boosting, Online Algorithms, and Other Topics in Machine Learning.”

The paper frames hallucinations as a subset of broader errors in language models, analyzed through computational learning theory. It divides the modern training pipeline into pretraining, where models learn language distributions from vast text corpora, and post-training, where refinements aim to curb issues like overconfidence. During pretraining, the researchers demonstrate that errors emerge even from error-free data because the statistical objectives optimized lead to generative mistakes. “Even if the training data were error-free, the objectives optimized during language model training would lead to errors being generated,” the authors explain.

This pretraining analysis draws a parallel to binary classification problems, where distinguishing valid outputs from errors proves challenging. The paper introduces the “Is-It-Valid” (IIV) classification task, showing that generative error rates are at least twice the IIV misclassification rate under certain conditions. Factors contributing to these errors include epistemic uncertainty for arbitrary facts like unique birthdays, where no learnable pattern exists, and poor model representations, as seen in letter-counting tasks where models like DeepSeek-V3 miscounted Ds in “DEEPSEEK” despite instructions.

🚨BREAKING: OpenAI published a paper proving that ChatGPT will always make things up.

— Nav Toor (@heynavtoor) March 6, 2026

Not sometimes. Not until the next update. Always. They proved it with math.

Even with perfect training data and unlimited computing power, AI models will still confidently tell you things that… pic.twitter.com/2WAoFXV0MA

For arbitrary facts, the research quantifies hallucination rates based on the “singleton rate,” the fraction of prompts appearing once in training data. In such cases, base models are expected to hallucinate on at least that fraction of facts, such as 20% if that share of birthday details appears only once. The paper extends prior work by Kalai and Vempala, incorporating prompts and uncertainty expressions like “I don’t know” (IDK), which some models avoid despite their validity.

Post-training, intended to mitigate hallucinations, instead perpetuates them due to evaluation methods that reward overconfident responses. “Language models are optimized to be good test-takers, and guessing when uncertain improves test performance,” the authors note. Binary grading systems, common in benchmarks like MMLU-Pro and MATH, award full points for correct answers but none for IDK or uncertainty, mirroring how students might bluff on exams. “Under binary grading, abstaining is strictly sub-optimal,” the paper observes, leading models to favor specific, confident guesses over vague or abstaining replies.

The researchers point to an “epidemic” of penalizing uncertain responses in dominant leaderboards, where even aligned models that express doubt score lower than those that hallucinate. A meta-analysis in the paper reviews benchmarks like GPQA and SWE-bench, finding most use binary metrics without credit for abstentions. This misalignment, they argue, explains why hallucinations endure despite techniques like reinforcement learning from human feedback.

To address this, the paper advocates socio-technical solutions: revising existing benchmarks to incorporate explicit confidence targets in prompts. For instance, instructions could state, “Answer only if you are >t confident, since mistakes are penalized t/(1-t) points, while correct answers receive 1 point, and an answer of ‘I don’t know’ receives 0 points.” Thresholds like t=0.5 or t=0.75 would encourage behavioral calibration, where models output only when sufficiently certain, potentially steering development toward more trustworthy systems.

The study emphasizes that hallucinations differ from human perceptual errors and apply broadly, including to reasoning and retrieval-augmented models. It acknowledges limitations, such as focusing on plausible strings and single-question prompts, but maintains that statistical drivers like cross-entropy minimization in pretraining and binary evaluations in post-training make such issues inherent. “This ‘epidemic’ of penalizing uncertain responses can only be addressed through a socio-technical mitigation: modifying the scoring of existing benchmarks that are misaligned but dominate leaderboards,” the authors conclude.