Tech executives claim their massive AI models are on the verge of achieving human-level intelligence, but leading researchers argue that current large language models lack the fundamental capabilities required for true intelligence and may never develop them.

The Four Critical Shortcomings

In an interview on the Lex Fridman podcast, Yann LeCun, Meta’s Chief AI Scientist and a Turing Award winner, outlined four essential characteristics of intelligence that, in his view, large language models cannot achieve with their current architecture.

“There is a number of characteristics of intelligent behavior,” LeCun explained. “For example, the capacity to understand the world, understand the physical world, the ability to remember and retrieve things, persistent memory, the ability to reason and the ability to plan. Those are four essential characteristics of intelligent systems or entities, humans, animals.”

His assessment: “LLMs can do none of those, or they can only do them in a very primitive way.”

Understanding the Physical World

LeCun argues that intelligence requires grounding in reality, and language alone provides insufficient information to build a genuine understanding of the world.

“Language is a very approximate representation of percepts and mental models,” he said. “There’s a lot of tasks that we accomplish where we manipulate a mental model of the situation at hand, and that has nothing to do with language. Most of our knowledge is derived from interaction with the physical world.”

He illustrated the gap between vision and language with a rough estimate: a four-year-old child has absorbed roughly 10^15 bytes of information through visual input over about 16,000 waking hours. By contrast, large language models are trained on about 2 × 10^13 bytes of text. That is the equivalent of 170,000 years of reading, yet it is still about 50 times less information than a preschooler has processed through sight.

This means that despite processing enormous amounts of text, LLMs lack the rich, sensory understanding of reality that even young children possess.
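The arithmetic behind that comparison is easy to check. The short Python sketch below uses only the three figures quoted above (LeCun's own rough estimates, not measurements). The function names are illustrative, and the per-second rate is derived here from those figures rather than quoted from the interview.

```python
# Figures as quoted in the article; these are LeCun's rough estimates.
VISUAL_BYTES = 10**15        # visual input absorbed by a four-year-old
WAKING_HOURS = 16_000        # waking hours over those four years
TEXT_BYTES = 2 * 10**13      # approximate size of LLM training text


def visual_to_text_ratio() -> float:
    """How many times more bytes the child sees than the model reads."""
    return VISUAL_BYTES / TEXT_BYTES


def implied_visual_rate() -> float:
    """Average visual bytes per waking second implied by the two figures."""
    seconds_awake = WAKING_HOURS * 3600
    return VISUAL_BYTES / seconds_awake


if __name__ == "__main__":
    print(f"visual / text ratio: {visual_to_text_ratio():.0f}x")
    print(f"implied visual rate: {implied_visual_rate() / 1e6:.1f} million bytes/s")
```

Under these assumptions the ratio works out to 50, matching the "50 times" claim, and the implied visual intake is roughly 17 million bytes per waking second.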

Memory Limitations

Current LLMs lack persistent memory in any meaningful sense. While some systems like ChatGPT have added basic memory features, these function more like programmable rules than true memory.

Human memory integrates into our understanding of the world in subtle ways, helping us predict outcomes and understand contexts without being explicitly told. LLM memory, by contrast, stores isolated facts without the deeper integration that characterizes human cognition.

Researchers from Cisco and the University of Texas acknowledged this problem in an October 2023 paper: “As we venture closer to creating Artificial General Intelligence systems, we recognize the need to supplement LLMs with long-term memory to overcome the context window limitation and more importantly, to create a foundation for sustained reasoning, cumulative learning and long-term user interaction.”

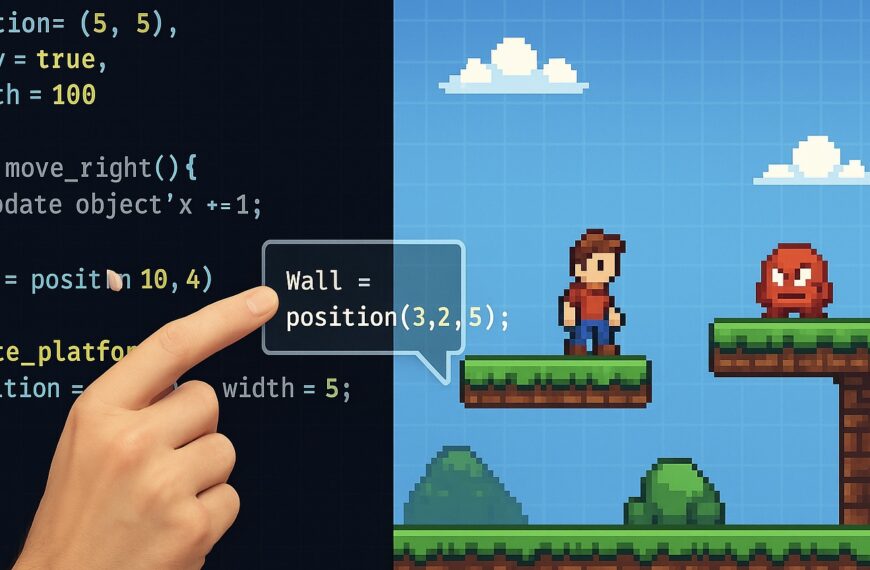

Faulty Reasoning

Despite their impressive performance on many tasks, LLMs do not actually reason—they mimic reasoning patterns found in their training data.

A meta-analysis of academic studies, conducted by researchers at the Munich Center for Machine Learning, found that “LLMs tend to rely on surface-level patterns and correlations in their training data, rather than on genuine reasoning abilities.”

The research documented three types of failures:

Inconsistent logic: Studies showed that when premises are presented in a different order—without changing the underlying logic—models like ChatGPT and GPT-4 encounter significant difficulties, even though the reasoning should remain identical.

Inability to maintain consistency: Researchers from NYU found that GPT models “exhibit poor consistency rates” when asked to maintain logical consistency across multiple steps of reasoning.

Susceptibility to bad arguments: Ohio State University researchers discovered that despite generating correct solutions initially, LLMs “cannot maintain their beliefs in truth for a significant portion of examples when challenged by oftentimes absurdly invalid arguments.”

In other words, an LLM that correctly solves a math problem can be convinced it was wrong by a user presenting faulty logic.

Planning Failures

Planning requires combining reasoning, memory, and a world model to determine the sequence of tasks needed to accomplish a goal. Because LLMs struggle with all three prerequisites, they cannot effectively plan.

Researchers from Princeton University and Microsoft Research confirmed that while LLMs can sometimes perform individual planning functions in isolation, they “struggle to autonomously coordinate them in the service of a goal.”

Arizona State University researchers concluded in February 2024 that “auto-regressive LLMs cannot, by themselves, do planning or self-verification.”

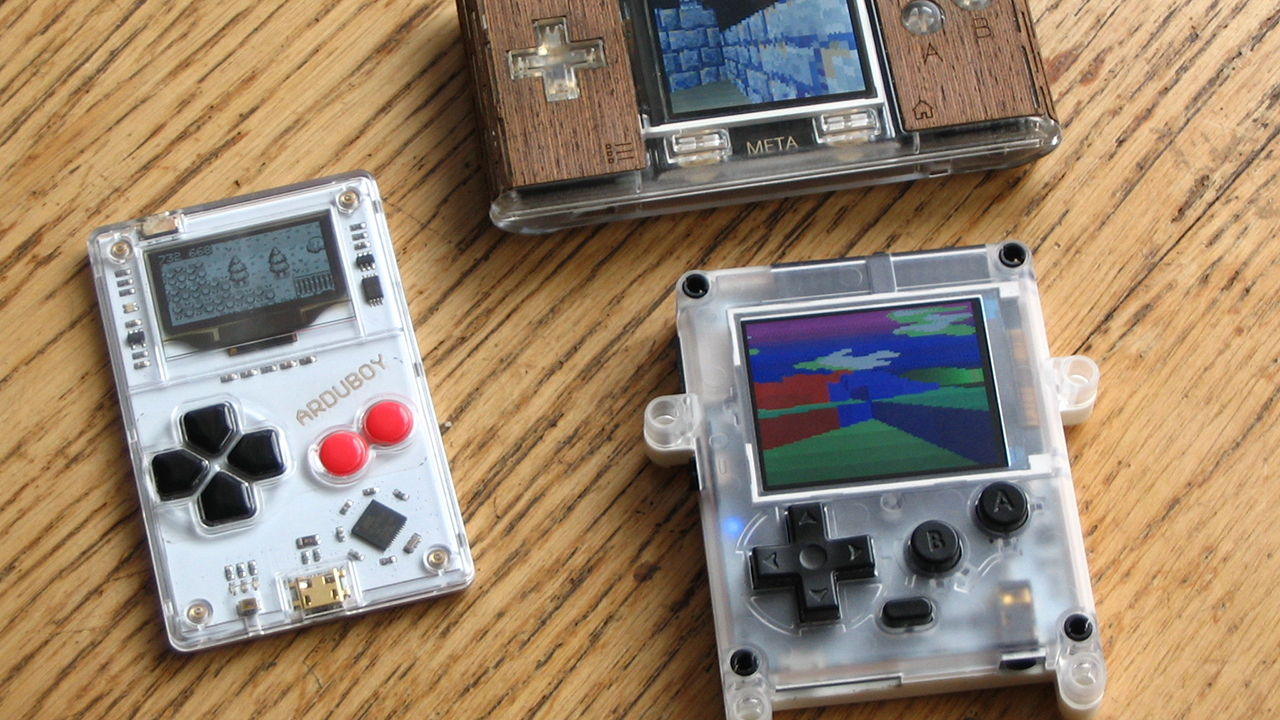

The Scaling Problem

Beyond these architectural limitations, the AI industry faces an economic crisis. The strategy of simply making models bigger—adding more parameters, training data, and computing power—has hit a wall.

The Information reported that OpenAI’s upcoming Orion model shows noticeably less improvement over GPT-4 than GPT-4 showed over GPT-3. In some areas like coding, there may be no improvement at all.

Gary Marcus, a cognitive scientist and AI researcher, warns that this threatens the entire industry. “Sky high valuation of companies like OpenAI and Microsoft are largely based on the notion that LLMs will, with continued scaling, become artificial general intelligence,” Marcus wrote. “As I have always warned, that’s just a fantasy.”

The economics are grim: training runs for large models cost tens of millions of dollars and require hundreds of AI chips running for months. Companies have already scraped virtually all available public data from the internet for training.

“LLMs such as they are, will become a commodity; price wars will keep revenue low,” Marcus predicts. “Given the cost of chips, profits will be elusive. When everyone realizes this, the financial bubble may burst quickly.”

What This Means

LeCun emphasizes that his critique doesn’t mean LLMs are useless. “That is not to say that autoregressive LLMs are not useful, they’re certainly useful, that they’re not interesting, that we can’t build a whole ecosystem of applications around them. Of course we can.”

However, he adds, “as a path towards human level intelligence, they’re missing essential components.”

The current generation of LLMs can provide tremendous value for text generation, summarization, translation, and many other tasks. But expecting them to achieve artificial general intelligence through incremental improvements may be, as Marcus put it, just a fantasy.