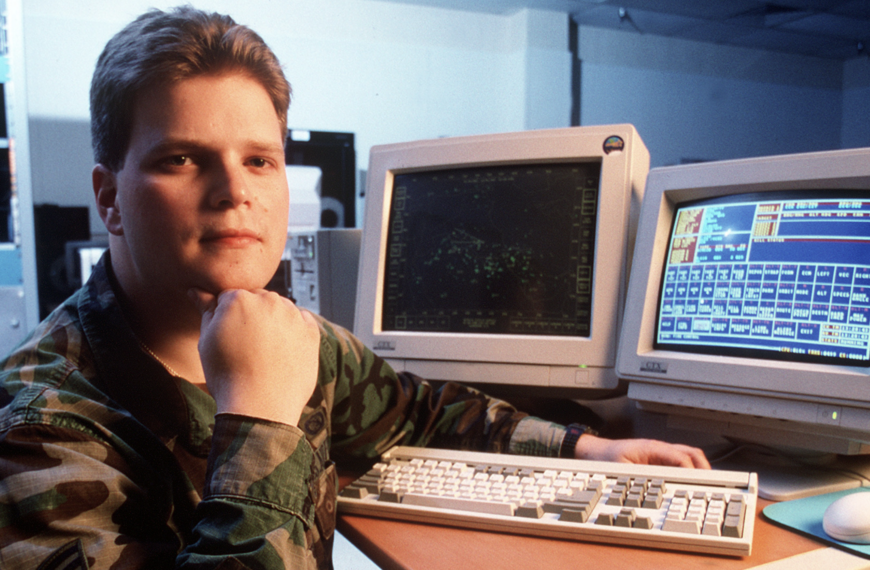

At the American Museum of Natural History on March 26, 2026, Neil deGrasse Tyson, Frederick P. Rose Director of the Hayden Planetarium, called for an international treaty to ban superintelligent AI, warning that unchecked development could pose existential risks.

Speaking at the 2026 Isaac Asimov Memorial Debate, Tyson drew parallels between the risks of superintelligent AI and the Cold War, emphasizing the role of diplomacy and global cooperation. “You know what kinda ended the Cold War? A lot of things contributed, but you know what mattered? The realization that if you launch nuclear weapons, intercontinental ballistic missiles, from the Soviet Union into the Western Hemisphere, and we see that, and we retaliate, everybody dies,” Tyson said.

He continued, referencing the concept of Mutual Assured Destruction (MAD): “We had an acronym for it, MAD. That’s what brought people to the table, and say, ‘This is insane. We have to regulate it. We have to reduce the stockpiling because preserving our species is what matters here.’”

Tyson then turned to the AI landscape, pointing to the prospect of machines that surpass human intelligence. “That branch of AI is lethal. We gotta do something about that. No one should build it, and everyone needs to agree to that by treaty,” he said. He stressed that while treaties are imperfect, they remain humanity’s best tool for mitigating global-scale risks.

The debate convened a panel of experts across disciplines, including Latanya Sweeney of Harvard University, Kate Crawford of the University of Southern California, Chris Callison-Burch of the University of Pennsylvania, Cynthia Rush of Columbia University, Nate Soares of the Machine Intelligence Research Institute, and Eric Schmidt, former CEO and Chairman of Google. Together, they discussed AI’s impact on scientific discovery, national security, data governance, and the ethical frameworks needed to guide future development responsibly.

Tyson’s remarks underscored what he sees as an urgent need to address superintelligent AI now, rather than reacting after technologies emerge that could outpace human control. “People will come to the table and say, ‘Yeah, keep the rest of the AI going. We got new medicines and new understandings of our physiology and new technologies that help us get smarter and healthier. Yes. But that branch of AI is lethal,’” Tyson said, advocating for global consensus on the issue.